Azure AI Fundamentals (AI-900)

- 236 exam-style questions

- Detailed explanations and references

- Simulation and custom modes

- Custom exam settings to drill down into specific topics

- 180-day access period

- Pass or money back guarantee

What is in the package

We've helped thousands of candidates prepare for Microsoft certification exams. We understand how the AI-900 is structured, what Microsoft is testing, and just as important, how the questions are designed to challenge you.

Every question in our bank matches the real exam in phrasing, difficulty, and the way wrong answers are presented. The incorrect options aren't random. On the real exam, they're chosen to catch specific misunderstandings, and ours work the same way. Reading the explanation for a wrong answer you picked is often more helpful than simply getting the question right.

We reference official Microsoft documentation for every question, so you're building knowledge that lasts. This way, you won't just memorize patterns to pass, but actually understand the material for real-world use.

Complete AI-900 domains coverage

The domain weightings on paper don't tell you where the real difficulty lives. Here's what our practice questions prepare you for in each domain.

Describing Artificial Intelligence Workloads and Considerations

This looks like the easy domain — until the exam puts you in a scenario and asks you to distinguish between fairness and transparency, or identify a workload that combines computer vision and NLP in a single system. Our questions are built around exactly these traps. By the time you sit the real exam, you'll have seen every variation of a responsible AI scenario and know how to tell the principles apart in context, not just by definition.

Describing Fundamental Principles of Machine Learning on Azure

This domain sounds technical but isn't: you don't need to build models, you need to know what each type is for and when Azure uses it. Our questions drill the distinctions that the exam tests: supervised vs unsupervised, regression vs classification vs clustering, and the specific purpose of Azure Machine Learning automated ML settings like "Explain best model." We cover these until they're second nature.

Describing Features of Computer Vision Workloads on Azure

The exam doesn't ask you to name services — it puts you in a scenario and makes the wrong service look tempting. "Analyze Image" vs "Describe Image" vs "Detect" vs "Verify" are not interchangeable, and we've watched candidates who knew the theory pick the wrong one on the day. Our questions are designed to close that gap, including the evolving ethical constraints on Azure Face service that Microsoft has woven into recent exam scenarios.

Describing Features of Natural Language Processing (NLP) Workloads on Azure

The recurring trap here is ambiguity: key phrase extraction, entity recognition, and summarisation can all look like the right answer when a scenario is loosely worded. We train you to read through the ambiguity by focusing on what each service is specifically designed to do — and what makes it different from its two closest alternatives. Speech vs Translator, Language vs Speech: our questions make sure you know when each one applies.

Describing Features of Generative AI Workloads on Azure

This is the domain that has changed the most in recent exam cycles and the one where outdated materials hurt candidates most. Our coverage is current: Azure OpenAI Service, Azure AI Foundry, RAG, prompt engineering, and model selection (GPT-4, DALL-E, Whisper, Embeddings) are all represented at the depth the exam now tests them. Candidates who underestimate this domain lose their passing margin here — our question bank makes sure you don't.

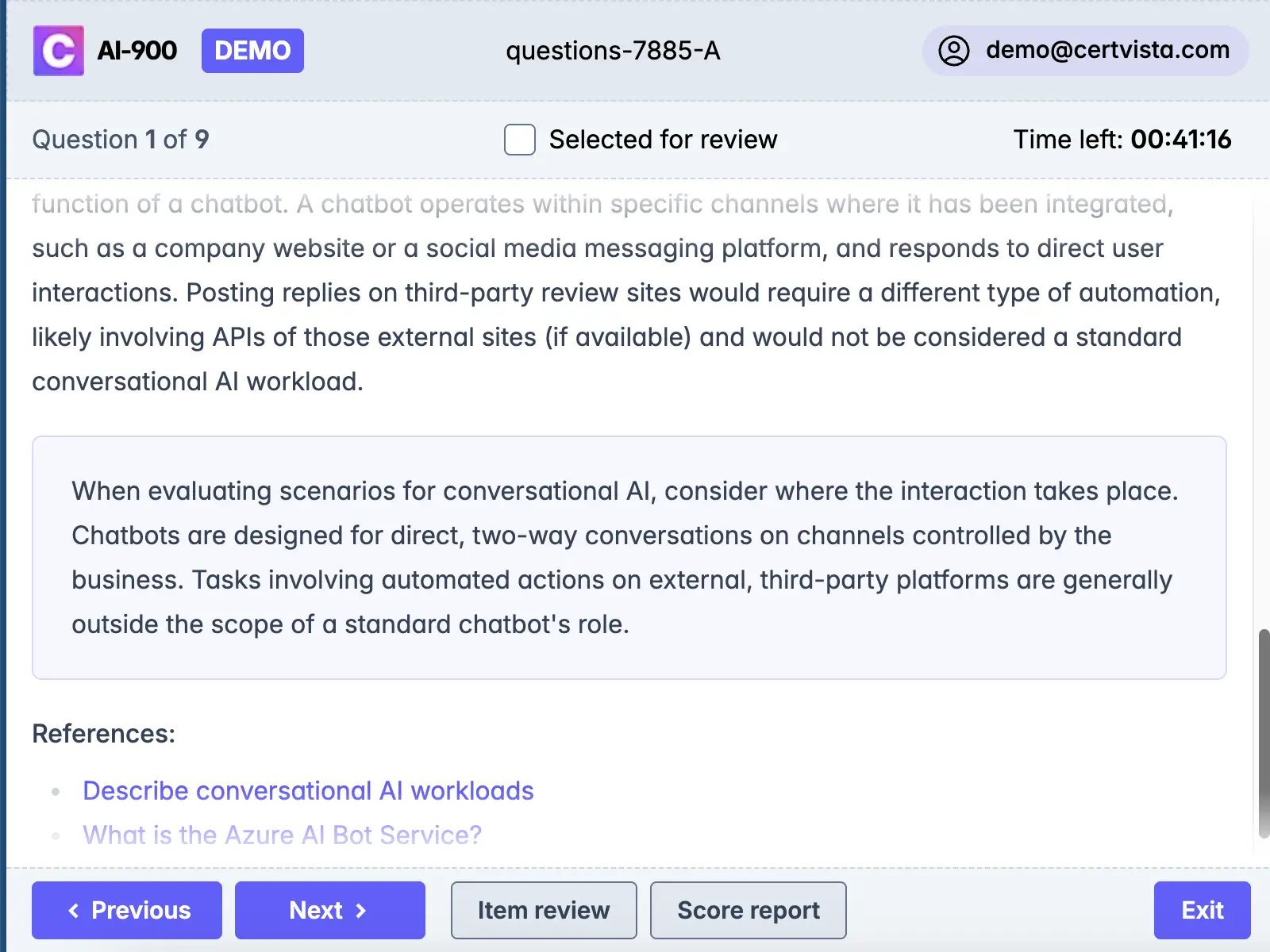

We've designed our practice environment to match the real Microsoft exam interface as closely as possible: the layout, navigation, countdown timer, and question flagging system.

This isn't cosmetic. Candidates who have practised in a familiar environment perform measurably better under exam conditions - not because they know more, but because they're not spending mental energy adapting to an unfamiliar interface while the clock runs down.

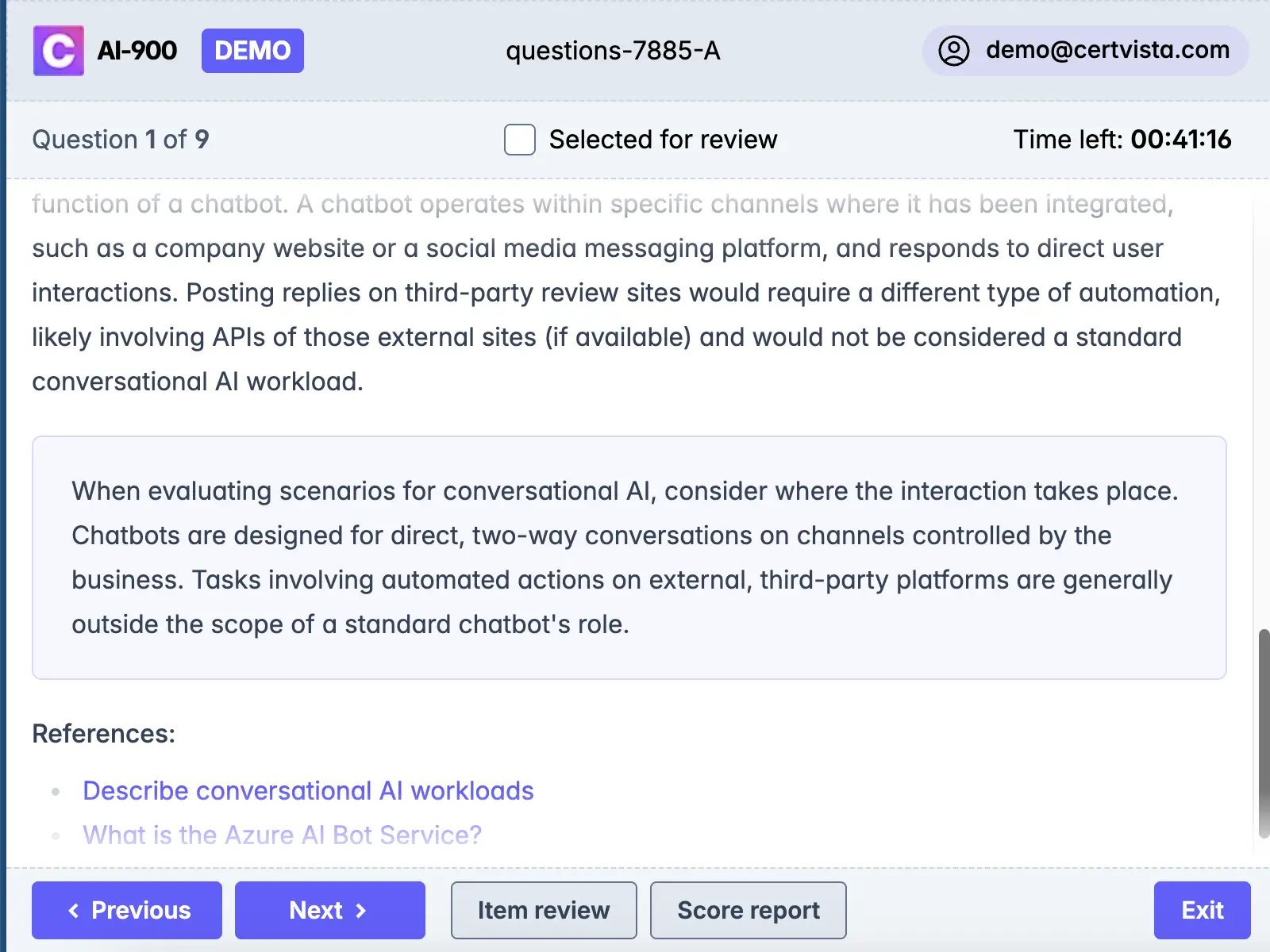

One of the most consistent patterns we see: candidates who practise exclusively with multiple-choice questions freeze when they hit a drag-and-drop or hot-area question on the real exam.

These formats require a different approach — you can't eliminate wrong answers the same way, and in a hot area question, you need to be confident enough in the right answer to click it, not just recognize it.

Our question bank covers every format the AI-900 uses.

There's a difference between an explanation that tells you the right answer and one that teaches you how to think about the question. Ours do the latter.

For every question we explain why each wrong answer is wrong — including the answers that were designed to look right. We explain the underlying concept, flag the common misunderstanding the question is targeting, and include an exam tip based on patterns we've seen across thousands of candidates. This is where the real exam preparation happens, and it's why we tell candidates to spend as much time reviewing correct and incorrect answers as they do taking the practice exam in the first place.

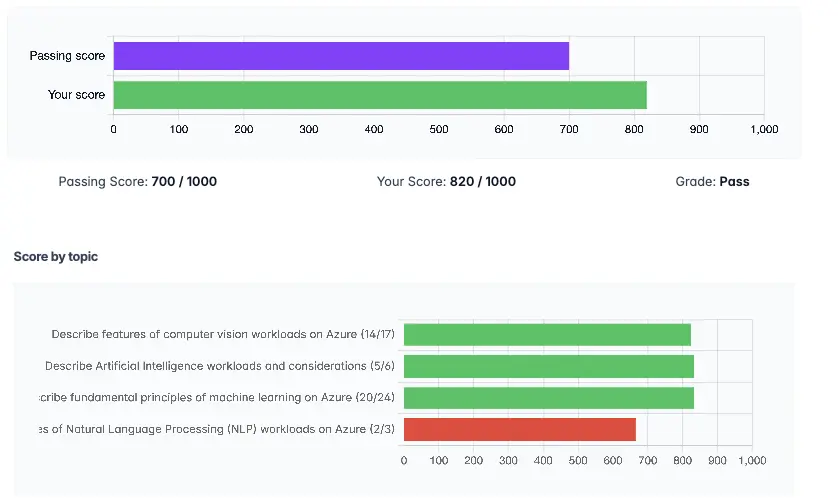

Score totals don't tell you what to do next. Our dashboard breaks your performance down by official exam domain so you can see exactly where to focus your remaining preparation time. If you're scoring 85% on Computer Vision and 55% on Generative AI, you know what to do with your last three days before the exam.
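As a rough illustration of that kind of triage, the sketch below sorts domains from weakest to strongest practice score. The function name and the score dictionary are hypothetical, the two percentages are the ones from the example above, and it is not a description of how the dashboard itself works.

```python
def focus_order(domain_scores: dict[str, float]) -> list[str]:
    """Return domain names sorted from weakest to strongest practice score."""
    return sorted(domain_scores, key=domain_scores.get)


# Percentages taken from the example in the text.
scores = {"Computer Vision": 85, "Generative AI": 55}
print(focus_order(scores))  # weakest domain comes first
```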

What's in the AI-900 exam

The AI-900 tests foundational knowledge of AI and machine learning concepts, as well as familiarity with the Azure services used to build AI solutions. No coding, no mathematics, no prior Azure experience required.

It's a broader exam than many candidates expect. You're not being asked to go deep on any one topic — you're being asked to demonstrate that you understand the AI landscape well enough to make informed decisions: which service to use, which approach is appropriate, which responsible AI principle applies. That breadth is what makes scenario-based questions the dominant format.

From our experience, it's also a more useful certification than its "fundamentals" label might suggest. The concepts it tests — AI workload types, responsible AI in practice, the trade-offs between different ML approaches — are things you'll actually use if you work with AI in any capacity.

Who should take the AI-900?

We see candidates from a wide range of backgrounds pass this exam. What they have in common isn't a technical background - it's the willingness to engage with the material rather than just memorise it.

The AI-900 is a good fit for IT professionals and developers who want a solid foundation before moving on to role-based certifications like AI-102. It's also helpful for business analysts, project managers, and decision-makers who need to discuss and evaluate AI solutions without relying on a technical team. For career changers and students, it's a great first Microsoft certification because it's achievable, doesn't expire, and leads to a clear progression path.

No prior Azure experience is required. A passing familiarity with cloud concepts helps, but isn't essential.

AI-900 exam format and structure

| Feature | Detail |
|---|---|
| Exam code | AI-900 |
| Number of questions | 40–60 |
| Time limit | 60 minutes |
| Question formats | Multiple choice, drag-and-drop, case studies, hot area |
| Passing score | 700 out of 1000 |
| Cost | ~$99 USD (varies by region) |
| Prerequisites | None |
| Certification validity | Does not expire |

One detail worth understanding: 700/1000 is not a straight 70%. Microsoft uses scaled scoring, which adjusts based on the difficulty of the specific questions you received. In practice this means your scaled score can be higher or lower than your raw percentage would suggest. Don't try to calculate exactly how many questions you can afford to miss — focus on genuine understanding across all domains.

Time management is worth practising. 60 minutes for up to 60 questions is comfortable if you're well prepared, but case study questions take longer than multiple choice. Candidates who haven't practised under timed conditions sometimes run short at the end.
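To put numbers on that pace, here is a minimal sketch of the arithmetic, using only the time limit and question range from the format table. The function name is illustrative, and the result is an average: it does not model case studies taking longer than single multiple-choice items.

```python
def seconds_per_question(time_limit_minutes: int, question_count: int) -> float:
    """Average time available per question, in seconds."""
    if question_count <= 0:
        raise ValueError("question_count must be positive")
    return time_limit_minutes * 60 / question_count


# 60 minutes, 40 to 60 questions (figures from the format table).
for count in (40, 50, 60):
    print(f"{count} questions: {seconds_per_question(60, count):.0f} s each")
```

At the upper end of the range this works out to one minute per question, which is why longer case study items need to be offset by faster answers elsewhere.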

AI-900 domain weightings

| Domain | Weighting |
|---|---|
| Describe AI workloads and considerations | 15–20% |
| Describe fundamental principles of ML on Azure | 15–20% |
| Describe features of computer vision workloads on Azure | 15–20% |
| Describe features of NLP workloads on Azure | 15–20% |
| Describe features of generative AI workloads on Azure | 20–25% |

The equal weighting across the first four domains can be misleading. Generative AI has a higher weighting than any of them individually, and recent exam feedback shows it often separates passing candidates from those who are borderline. Plan your preparation with this in mind.

How difficult is the AI-900?

Honestly, it all comes down to how you prepare. We've seen people with no technical background pass easily on their first try, and we've also seen IT professionals fail because they thought their experience alone would be enough without structured preparation.

The AI-900 is accessible, but it's not something you can just check off. Because the exam uses scenarios, you can't just rely on recognizing service names. Microsoft has made the wrong answers believable, so in a good question, the incorrect option is almost right, not obviously wrong.

Our honest benchmark: if you're consistently scoring above 750 on our practice exams, you're ready. If you're around 700–730, you'll probably pass but you have identifiable weak areas worth addressing first. Below 700, keep studying — don't book the exam yet.
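The benchmark can be written down as a small rule of thumb. The sketch below rests on two assumptions that the text does not spell out: "consistently" is read as "every recent practice score", and scores between 730 and 750 are grouped with the 700–730 band. The function name and labels are illustrative only.

```python
def readiness(recent_scores: list[int]) -> str:
    """Map recent practice-exam scores (0-1000 scale) to a rough verdict."""
    if not recent_scores:
        raise ValueError("need at least one practice score")
    # Assumption: "consistently" means even the lowest recent score clears the bar.
    lowest = min(recent_scores)
    if lowest >= 750:
        return "ready"
    # Assumption: anything from 700 up to 749 falls in the middle band.
    if lowest >= 700:
        return "likely pass, fix weak areas first"
    return "keep studying"


print(readiness([760, 790, 775]))
print(readiness([760, 720, 775]))
print(readiness([690, 720]))
```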

AI-900 retake policy

If you don't pass the first time, it's not the end. However, it's important to know the retake rules before you take the exam, not after.

- First retake: you can rebook after 24 hours

- Second and subsequent retakes: 14-day waiting period between each attempt

- Maximum attempts: 5 within any 12-month period

- After passing: you cannot retake to improve your score

The 14-day waiting period can actually help if you use it well. Review your score report, find out which domains you struggled with, and use that time to work on those areas instead of just retaking the exam with the same preparation. Candidates who retake the exam without changing their approach rarely see different results.
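For planning purposes, the waiting periods listed above can be sketched as a small date calculation. This is an illustration rather than an official tool: it assumes the 12-month limit is a rolling 365-day window, and the function name is hypothetical. Check the current Microsoft policy before booking.

```python
from datetime import datetime, timedelta


def earliest_retake(failed_attempts: list[datetime]) -> datetime:
    """Earliest time the next attempt could be taken, per the rules listed above."""
    if not failed_attempts:
        raise ValueError("need at least one failed attempt")
    attempts = sorted(failed_attempts)
    # 24 hours after the first attempt, 14 days after every later one.
    wait = timedelta(hours=24) if len(attempts) == 1 else timedelta(days=14)
    earliest = attempts[-1] + wait
    # Assumption: "5 within any 12-month period" is a rolling 365-day window,
    # so a sixth attempt must wait until the fifth-most-recent one ages out.
    if len(attempts) >= 5:
        earliest = max(earliest, attempts[-5] + timedelta(days=365))
    return earliest


first = datetime(2026, 1, 1, 9, 0)
print(earliest_retake([first]))
print(earliest_retake([first, datetime(2026, 1, 2, 9, 0)]))
```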

How does the AI-900 compare to other foundational AI exams?

| Certification | Provider | Focus | Best suited for |
|---|---|---|---|
| Azure AI Fundamentals (AI-900) | Microsoft | Foundational AI concepts + Azure AI services | Anyone working in or planning to work in the Microsoft/Azure ecosystem |
| AWS Certified AI Practitioner (AIF-C01) | Amazon Web Services | Foundational AI/ML + AWS AI services | Those focused on the AWS ecosystem |
| Google Cloud Digital Leader | Google Cloud | Cloud fundamentals + Google Cloud products, strong on AI/data | Business professionals new to cloud |
| CompTIA Cloud Essentials+ | CompTIA | Vendor-neutral cloud fundamentals | Those wanting platform-agnostic knowledge |

If your organization uses Microsoft infrastructure like Microsoft 365, Dynamics, Power Platform, or Azure, the AI-900 is the best place to start. The knowledge you gain applies directly to tools you probably already use or will use soon.

If you truly can't decide between AWS and Azure, look at which platform is more common in your industry. Both certifications are respected, but the key is to choose the one you'll actually use in your job.

Recommended study plan

Most candidates can prepare well in two to three weeks if they focus. Here's how we suggest you organize your study time.

Week 1 — Core concepts

Days 1–2: AI workloads and responsible AI

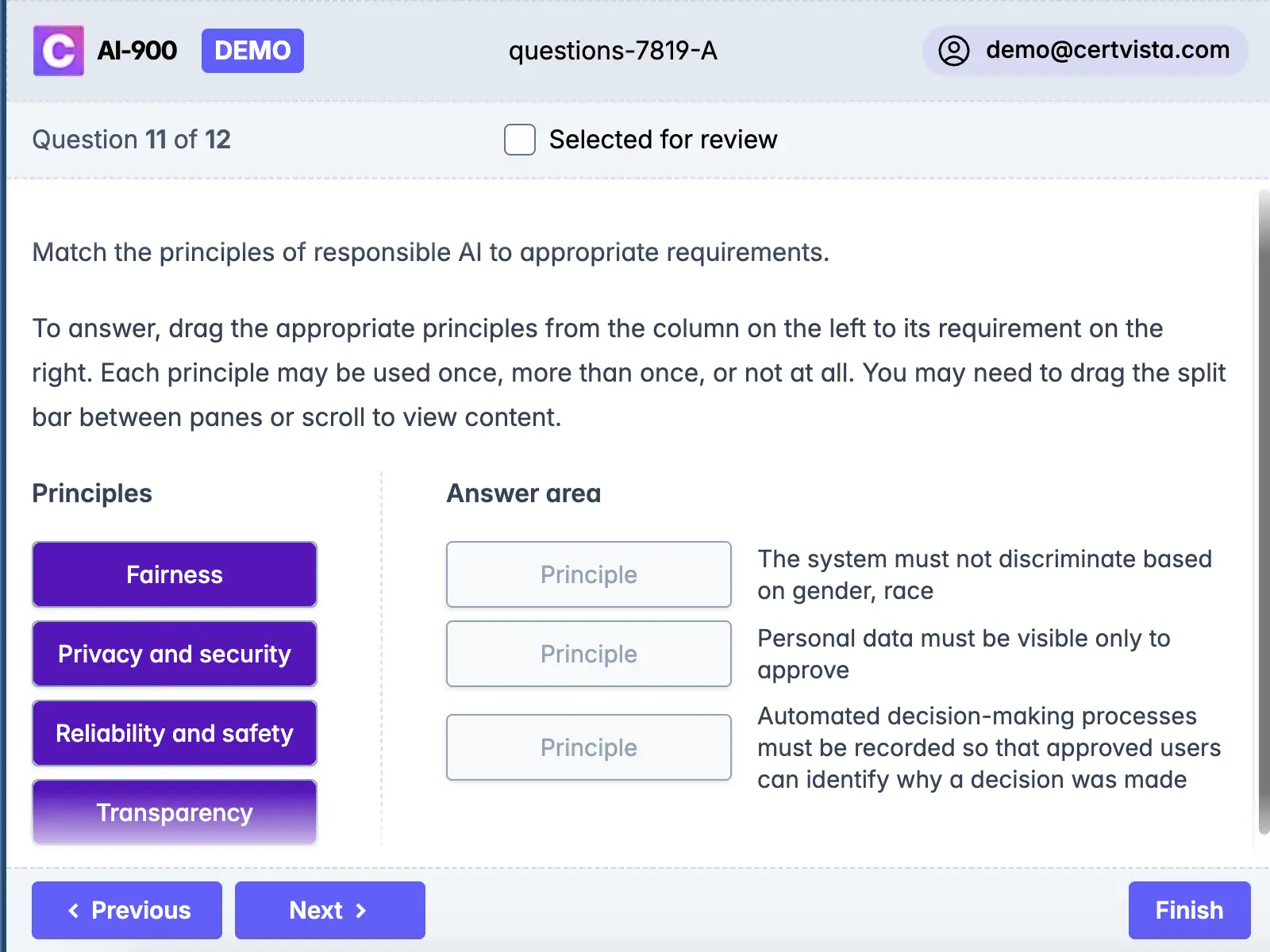

Begin with the Microsoft Learn modules on AI workloads. Don't skip the responsible AI section; read it carefully and test yourself on the differences between the principles. For example, know how to tell fairness from transparency, and accountability from reliability, especially in real scenarios, not just by their definitions.

Days 3–4: Machine learning fundamentals

Work through the ML modules, focusing on when each technique applies. If you can access Azure's free tier, run a quick automated ML experiment — even a toy one. Understanding what "training data", "features", and "labels" look like in an actual interface makes the exam questions significantly easier to reason about.

Days 5–7: Computer vision and NLP

For each service and feature, ask yourself: what is this specifically for, and what would be the most tempting wrong alternative? That's the question the exam will ask. Run through the Azure AI Vision and Language demos if you can — seeing OCR and sentiment analysis in action is worth more than reading about them.

Week 2 — Generative AI and practice

Days 8–10: Generative AI on Azure

Spend more time on this domain than the 20–25% weighting suggests, since it covers the most material and has the newest updates. Go through Azure OpenAI Service, Azure AI Foundry, RAG, prompt engineering, and model families in a systematic way. If you can, spend time in the Azure OpenAI playground. Questions in this domain often depend on knowing specific details.

Days 11–14: Practice exams and closing gaps

Take a full, timed practice exam. Then, and this is the step most candidates skip, read every explanation, even for the questions you got right. Use your domain breakdown to decide where to focus for the rest of your study time. Take another full practice exam near the end of the week.

Week 3 - If you need it

If you consistently score 750 or higher on practice exams, you're ready. If not, spend a third week focusing on your weak areas instead of reviewing everything. Take one more timed exam before you book the real test.

The mistakes we see candidates make

After training thousands of students, we see certain patterns come up again and again. These are the mistakes that cost otherwise well-prepared candidates a pass.

Treating responsible AI as background reading

The responsible AI domain may seem like soft content: principles, ethics, and high-level ideas. Many candidates skim it, memorize the names of the six principles, and think that's enough. But the exam will present a scenario in which the right answer depends on knowing exactly what "transparency" means in AI, compared to "accountability." These questions require real understanding, not just recognition. Give this domain as much attention as you would computer vision or NLP.

Memorising the name, not the use case

"Which Azure service would you use to extract key phrases from customer feedback?" isn't just a memory test; it's about matching the right service to the use case. If you've only learned the service names and a short description, you'll get the question right until Microsoft makes the scenario a bit more ambiguous. Know what each service does, when to use it instead of a similar one, and what its limitations are.

The AI-900 has changed a lot, especially in the generative AI domain. Brain dumps are never reliable, and after an exam update, they can actually hurt your chances because they focus on questions that are no longer used and leave you unprepared for new ones. Always use up-to-date, official practice materials.

Skipping hands-on practice

The exam is all about scenarios, and they're much easier if you've seen real examples. Spending an hour in the Azure portal—running an automated ML job, testing the Vision API, or exploring the OpenAI playground—is more valuable than two hours of reading. Use Azure's free tier; it doesn't cost anything.

Treating a practice exam as a score check

A practice exam score shows you your current level. Reviewing the explanations tells you how to improve. If you just take a practice exam, check your score, and move on, you're missing out on half the benefit. Spend as much time reviewing explanations as you do taking the test.

Not practising under timed conditions

The AI-900 isn't a brutal time crunch, but candidates who have never experienced exam-pace pressure sometimes rush at the end. Take at least two of your practice exams with the timer running and no interruptions. Get used to the pace.

Why pursue the AI-900?

It doesn't expire. Unlike most Microsoft role-based certifications, which require annual renewal, the Azure AI Fundamentals credential is permanent. You earn it once.

It's a real foundation for AI-102. The Azure AI Engineer Associate certification focuses on designing and building AI solutions at a professional level. The AI-900 is the quickest way to prepare for that exam because the basic concepts carry over directly. Candidates who have completed AI-900 usually need less time to get ready for AI-102 than those starting from scratch.

AI literacy is now expected. In organizations of any size that use Microsoft technology, being able to talk knowledgeably about what AI can and can't do is becoming a basic requirement, not just a specialist skill. Having a recognized certification is the clearest way to show you have that knowledge.

The knowledge is useful beyond the exam. Responsible AI, choosing workloads, and understanding the trade-offs between different approaches are not just exam topics. If you work with AI in any way, you'll use these ideas.

Exam changelog

| Date | Change |
|---|---|
| May 2025 | Generative AI domain weighting raised; Azure AI Foundry added as testable topic; OpenAI model coverage expanded to include Whisper and Embeddings; RAG scenarios introduced |
| 2024 | Responsible AI content updated to reflect Microsoft RAI Standard v2; some legacy cognitive services questions retired |
| 2023 | Generative AI added as a standalone domain; exam restructured from 4 to 5 domains |

We update our question bank within 30 days of any official Microsoft exam change. If you notice a discrepancy between our content and current Microsoft documentation, contact us and we'll review it immediately.

Final take

The AI-900 is within reach for almost anyone who prepares well. Two to three weeks of structured study, hands-on practice with the services, and quality practice exams with thorough explanation review is the formula for success. We've seen this approach work for people with no technical background and for experienced engineers looking to formalize their AI knowledge.

What doesn't work is last-minute cramming, using brain dumps, memorizing service names, or skipping the generative AI domain just because it's new. We've seen all of these strategies lead to failure, even for candidates who thought they were ready.

If you prepare well, the AI-900 is truly achievable. Our practice exams are made to ensure that when you take the real test, nothing will catch you off guard.

Content maintained by the CertVista training team. Last reviewed: March 2026.

Sample AI-900 questions

Get a taste of the Azure AI Fundamentals exam with our carefully curated sample questions below. These questions mirror the actual AI-900 exam's style, complexity, and subject matter, giving you a realistic preview of what to expect. Each question comes with comprehensive explanations, relevant documentation references, and valuable test-taking strategies from our expert instructors.

While these sample questions provide excellent study material, we encourage you to try our free demo for the complete AI-900 exam preparation experience. The demo features our state-of-the-art test engine that simulates the real exam environment, helping you build confidence and familiarity with the exam format. You'll experience timed testing, question marking, and review capabilities – just like the actual certification exam.

You run a charity event that involves posting photos of people wearing sunglasses on Twitter.

You need to ensure that you only retweet photos that meet the following requirements:

- Include one or more faces.

- Contain at least one person wearing sunglasses.

What should you use to analyze the images?

- the Detect operation in the Face service

- the Analyze Image operation in the Computer Vision service

- the Describe Image operation in the Computer Vision service

- the Verify operation in the Face service

This scenario has two requirements: the photo must contain at least one face, and at least one person must be wearing sunglasses. The Detect operation in the Face service covers both. It locates each face in an image and can return face attributes, including a glasses attribute that distinguishes sunglasses from reading glasses or no glasses at all.

The Analyze Image operation in the Computer Vision service is the tempting alternative. It can detect faces and return general tags and objects for an image, but it is designed for overall image content and does not report per-face attributes such as the type of glasses a detected person is wearing.

The Describe Image operation generates a textual description, but it may not always list all relevant visual details or detect individual accessories reliably.

The Verify operation in the Face service is intended for face comparison and verification scenarios (for example, determining if two faces belong to the same person), not for analyzing image content for sunglasses.

Therefore, the Detect operation in the Face service is the best choice to meet both requirements: detecting faces and identifying sunglasses.

Pay careful attention to the scenario's requirements and match them to the specific capabilities of Azure AI services: when a requirement concerns an attribute of a detected face, such as glasses, look to the Face service rather than general image analysis.

You are building an AI system.

Which task should you include to ensure that the service meets the Microsoft transparency principle for responsible AI?

- Ensure that all visuals have an associated text that can be read by a screen reader.

- Ensure that a training dataset is representative of the population.

- Provide documentation to help developers debug code.

- Enable autoscaling to ensure that a service scales based on demand.

The correct answer is to provide documentation to help developers debug code. This aligns with the Microsoft transparency principle for responsible AI, which emphasizes that AI systems should be understandable, and users should have access to information about how the system operates. Proper documentation, especially that which aids in debugging and understanding how the AI makes decisions, directly supports transparency by making the system's functions, limitations, and behaviors clear to those developing, deploying, or using the solution.

The option to ensure all visuals have text for screen readers relates more to accessibility than transparency. Making sure a dataset is representative addresses the fairness principle, which focuses on minimizing bias. Enabling autoscaling is related to service reliability and scalability but does not impact transparency about how the AI system works.

Transparency is about providing sufficient information about AI systems so users and stakeholders can understand how and why decisions are made.

Focus on the core of the transparency principle: ensuring that users and developers can access explanations and documentation for how the AI system operates, its decision-making process, and its limitations.

Your company wants to build a recycling machine for bottles. The recycling machine must automatically identify bottles of the correct shape and reject all other items.

Which type of AI workload should the company use?

- Natural language processing

- Anomaly detection

- Computer vision

- Conversational AI

For this scenario, the company requires a system that can automatically identify bottles based on their shape, which involves analyzing images to distinguish between bottles and other objects. This type of task is best addressed with computer vision.

Computer vision enables machines to interpret and process visual data from the world, such as identifying objects in images or videos. In this context, a camera or sensor could be used to capture images of items placed in the recycling machine, and a computer vision model can determine whether the item matches the desired bottle shape, automating the sorting process effectively.

Natural language processing is focused on text and speech understanding, not image analysis. Anomaly detection identifies unusual patterns or data outliers, but it is not used for recognizing physical shapes in images. Conversational AI is used for building chatbots and virtual agents, not for processing visual input.

Focus on the input type described in the scenario: identifying shapes visually signals use of computer vision. Be cautious with distractions such as anomaly detection, which might sound relevant but are not about visual object classification.

You are developing a solution that uses the Text Analytics service. You need to identify the main talking points in a collection of documents.

Which type of natural language processing should you use?

- Language detection

- Key phrase extraction

- Entity recognition

- Sentiment analysis

To identify the main talking points in a collection of documents, you should use key phrase extraction. Key phrase extraction is a natural language processing (NLP) technique that automatically identifies the most important words and phrases in text data, helping to summarize the central topics discussed. The Azure Text Analytics service provides a dedicated API for key phrase extraction, which scans the document and returns a list of key terms and phrases that represent the main talking points.

Language detection is used to determine the language of the text, not its main points. Entity recognition focuses on identifying specific entities such as people, organizations, or locations, not general topics. Sentiment analysis determines the emotional tone (positive, negative, or neutral) of the text, not its subject.

On the exam, look for phrases like "identify topics," "main points," or "summarize content"; these often refer to key phrase extraction. If the question refers to identifying people, places, or things, think of entity recognition instead.
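The exam itself requires no coding, but seeing the call shape can make the service easier to remember. The sketch below assumes the `azure-ai-textanalytics` Python package, whose `TextAnalyticsClient` exposes `extract_key_phrases`. The helper name `main_talking_points` is hypothetical, and the endpoint and key in the commented usage are placeholders.

```python
def main_talking_points(client, documents: list[str]) -> list[list[str]]:
    """Return the key phrases found in each document (empty list on error)."""
    results = client.extract_key_phrases(documents)
    # Each result is either a success object with .key_phrases or an error
    # object; check .is_error before reading the phrases.
    return [[] if doc.is_error else list(doc.key_phrases) for doc in results]


# Usage with the real SDK (needs an Azure AI Language resource):
#
#   from azure.ai.textanalytics import TextAnalyticsClient
#   from azure.core.credentials import AzureKeyCredential
#
#   client = TextAnalyticsClient("<endpoint>", AzureKeyCredential("<key>"))
#   print(main_talking_points(client, ["The hotel was clean but the wifi was slow."]))
```

Passing the client in as a parameter keeps the helper easy to try with a stand-in object before you have a resource provisioned.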

You build a machine learning model by using the automated machine learning user interface (UI).

You need to ensure that the model meets the Microsoft transparency principle for responsible AI.

What should you do?

- Set Primary metric to accuracy.

- Enable Explain best model.

- Set Max concurrent iterations to 0.

- Set Validation type to Auto.

To meet the Microsoft transparency principle for responsible AI, you need to ensure that the chosen machine learning model can be explained and its decisions understood. In Azure Machine Learning's automated machine learning UI, enabling the Explain best model feature provides model interpretability features, which help you understand why the best model made specific predictions. This aligns with the transparency principle, which focuses on making AI systems and their decisions as open and understandable as possible.

Setting the primary metric to accuracy, choosing max concurrent iterations, or changing the validation type affects model performance but does not address transparency or interpretability. Only enabling model explanation directly supports this responsible AI principle.

When asked about responsible or ethical AI principles such as transparency, focus on features that help users understand, explain, or audit the model (for example, model explanation, feature importance). Accuracy and training-related settings do not address ethical principles directly.

Which scenario is an example of a webchat bot?

- From a website interface, answer common questions about scheduled events and ticket purchases for a music festival.

- Translate into English questions entered by customers at a kiosk so that the appropriate person can call the customers back.

- Determine whether reviews entered on a website for a concert are positive or negative, and then add a thumbs up or thumbs down emoji to the reviews.

- Accept questions through email, and then route the email messages to the correct person based on the content of the message.

The best example of a webchat bot among the scenarios described is a system that, from a website interface, answers common questions about scheduled events and ticket purchases for a music festival. A webchat bot is designed to interact with users through a chat interface embedded in a web application, providing automated responses and conversational support.

The other options describe the use of different AI capabilities: translating kiosk queries, performing sentiment analysis on reviews, and email content routing. None of these involve a webchat interface or conversational UI designed to answer user questions interactively within a web context.

On the AI-900 exam, differentiate solution scenarios by identifying the interaction mode. Webchat bots always involve a chat interface, usually accessible from a browser, and provide automated conversational responses. If a scenario mentions chat, conversation, and a website interface, it likely relates to webchat bots.

You need to create a clustering model and evaluate the model by using Azure Machine Learning designer.

What should you do?

- Split the original dataset into a dataset for training and a dataset for testing. Use the testing dataset for evaluation.

- Use the original dataset for training and evaluation.

- Split the original dataset into a dataset for training and a dataset for testing. Use the training dataset for evaluation.

- Split the original dataset into a dataset for features and a dataset for labels. Use the features dataset for evaluation.

When building a clustering model in Azure Machine Learning designer, you should split the original dataset into two subsets: one for training the model and one for testing. After training your clustering model on the training data, you evaluate the model using the separate testing dataset. This approach gives an unbiased evaluation of the model's performance and shows how well the model generalizes to new, unseen data. Evaluating on a held-out testing dataset is standard practice in machine learning because it reveals overfitting that evaluation on the training data would hide.

Using the original dataset for both training and evaluation can lead to overly optimistic results, because the model is scored on data it has already seen. Evaluating on the training set has the same problem: it tells you nothing about performance on unseen data. Lastly, clustering is an unsupervised task, so splitting into features and labels isn't applicable, as there are generally no labels in clustering tasks.

For questions about model evaluation, always remember to use a testing/validation dataset that was not seen by the model during training. Look out for hints on appropriate dataset splitting for unbiased evaluation.

When you design an AI system to assess whether loans should be approved, the factors used to make the decision should be explainable.

This is an example of which Microsoft guiding principle for responsible AI?

- Fairness

- Privacy and security

- Transparency

- Inclusiveness

In this scenario, you are designing an AI system that assesses loan applications. Ensuring that the factors and logic behind approval decisions are explainable refers to the responsible AI principle of transparency.

Transparency emphasizes that AI systems should provide intelligible explanations for their actions and decisions. This allows users and stakeholders to understand how outcomes—such as loan approvals or denials—are determined, thereby fostering trust and accountability.

Fairness deals with preventing discrimination and ensuring that the AI system treats all individuals equitably. Privacy and security focus on safeguarding user data and respecting confidentiality. Inclusiveness ensures that AI systems are designed for a broad set of users, including those with disabilities.

Transparency, specifically, is about being able to clearly communicate how and why the AI system made its decisions, which is crucial in scenarios such as loan approvals where individuals may be significantly impacted.

To answer similar questions, focus on key words in the scenario. If the scenario asks about understanding, explaining, or making AI decisions clear to stakeholders, it's pointing to the transparency principle.


Frequently Asked Questions

Yes, with one caveat. It's worth it if you're working in or moving toward the Microsoft ecosystem, or if you want a structured foundation before tackling role-based certifications like AI-102. It's less valuable as a standalone credential if your organisation runs primarily on AWS or Google Cloud. The fact that it doesn't expire makes the risk low: you earn it once and it stays on your profile permanently.

Two to three weeks of focused study is realistic for most candidates. That assumes roughly an hour or two per day, working through the official Microsoft Learn path, getting some hands-on time with Azure's free tier, and taking practice exams with proper explanation review. Candidates who try to cram it into a weekend almost always underperform on the scenario-based questions — those require genuine understanding, not last-minute memorisation.

Yes. We've seen candidates from entirely non-technical backgrounds — HR, finance, marketing — pass comfortably with the right preparation. The exam tests conceptual understanding, not coding ability or mathematics. What matters is that you engage with the material rather than just memorise it. Scenario-based questions test whether you understand what AI services do in context, and that's learnable regardless of background.

AZ-900 (Azure Fundamentals) covers cloud computing concepts broadly — infrastructure, storage, networking, pricing, and Azure services in general. AI-900 goes deep on one specific area: artificial intelligence and machine learning on Azure. If you're interested in AI specifically, start with AI-900. If you want a broader Azure foundation first, AZ-900 makes sense before moving to AI-900 or any role-based certification. Some candidates do both; they complement each other well.

AI-900 is foundational: it tests whether you understand AI concepts and Azure AI services at a conceptual level. AI-102 (Azure AI Engineer Associate) is an associate-level, role-based certification that tests whether you can actually design and implement AI solutions. AI-900 is the right starting point; AI-102 is where you go next if you're working as or moving into an AI engineering role. The concepts from AI-900 carry over directly, which is why candidates who have passed AI-900 typically need less preparation time for AI-102 than candidates who go in cold.

No, and for AI-900 specifically, they're particularly unreliable. The exam has been updated significantly in recent cycles, especially the generative AI domain. Brain dumps lag behind exam updates and will train you on questions that no longer appear while leaving you unprepared for content that does. More fundamentally, the AI-900's scenario-based format means you can't pass by recognising question patterns; you need to understand the underlying concepts. Candidates who rely on brain dumps tend to fail on scenarios they've never seen before. Use current, maintained practice materials instead.